Self-Evaluation and Learning - Where is the Reset Button?

How to unleash the power of self-evaluation?

By: Rasmus Heltberg

The World Bank began doing self-evaluations of completed loan projects 40 years ago because President McNamara wanted to know the results of the Bank’s investments. In a previous blog, co-authored with Caroline Heider, we described why there is little organizational learning flowing from these systems.

This blog is about how to unleash the power of self-evaluation, building on a new IEG evaluation. It explores how the World Bank Group can move from a compliance mindset to a results and learning orientation when it comes to self-evaluation.

Tweaks to templates, training, and processes will not suffice. The fix will have to address behaviors. Specifically, three causes of misaligned incentives around self-evaluations (see figure 1) will have to be corrected.

Figure 1: A Map of Complex Incentives in Three Areas

First, the incentives and managerial signals surrounding self-evaluation need a reset. Bank Management has launched a process to reform the Implementation Completion Report for Bank investment. This is an opportunity to reform templates, processes, and ratings guidelines for project self-evaluation to reduce barriers to innovation, experimentation, and learning. For example, one way to improve the perceived fairness of the rating system would be to better account for unpredictable disasters, conflict, and economic crises that adversely affect project development outcomes.

Second, results and evaluative evidence must begin to count. Managers could tone down the attention to outcome ratings and the disconnect with IEG, and instead send signals that learning, performance, and creating results for clients are what count. Self-evaluations that are not used send a powerful signal that they do not matter. WBG managers and board members could make more use of knowledge from self-evaluation, and regular learning, strategic, and planning processes could be infused with evaluative evidence, thereby sending a signal to staff about the value of results data.

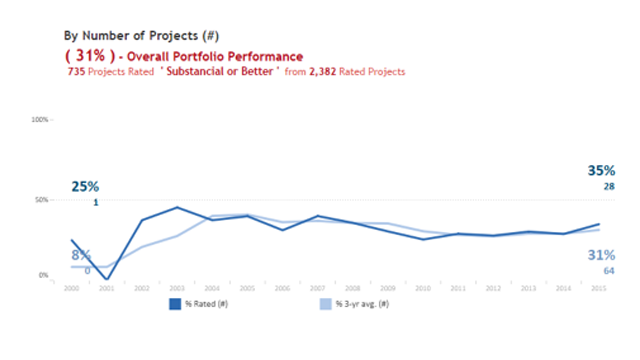

The quality of M&E must improve. IEG rates the quality of World Bank M&E at around 30% satisfactory—a figure that hasn’t budged in years (figure 2). A Results Measurement and Evidence Stream has been set up to improve M&E skills across the WBG but more is needed.

Figure 2: M&E Ratings not Improving Over Time

We could make much more use of dialogue formats and deliberative meetings. Teams need safe spaces, shielded from public scrutiny, where they can reflect on data and evidence, solve problems, and generate lessons.

Third, more selective and voluntary approaches would help self-evaluations become more valuable. Right now, the systems prescribe what to self-evaluate (mostly projects) and how and when to do so. In addition, managers and teams could commission evaluations (retrospectives, impact evaluations, process evaluations, and so on) on topics where they see a need to learn more—using whatever scope and methodology best fits the need.

Impact evaluations are voluntary and should remain so. We could, however, be more systematic about which impact evaluations are commissioned and how we incorporate their findings into operational design.

How will we know that the system works? Chess players have a personal rating that is updated after each game and eagerly scrutinized. In development, there is nothing similar and I don’t think it can be devised. In the WBG, we will know that our system works when staff and managers say that they use it and learn from it and when IEG evaluations point to new and different mistakes and missed opportunities rather than the same tired old ones.
