In Part 1 of this series we argued that, while applications of big data and data analytics are expanding rapidly in many areas of development programs, evaluators have been slow to adopt these applications. We suggested that, in one possible future scenario, evaluation would no longer be considered a separate function and would instead be treated as one of the outputs of the integrated information systems that many development agencies will gradually adopt. Furthermore, many evaluations would use data analytics approaches rather than conventional evaluation designs.

Here, in Part 2, we identify some of the reasons why development evaluators have been slow to adopt big data analytics, and we propose some promising approaches for building bridges between evaluators and data analysts.

Why have evaluators been slow to adopt big data analytics?

Caroline Heider at the World Bank Independent Evaluation Group identifies four sets of data collection-related challenges affecting the adoption of new technologies by evaluators: ethics, governance, biases (potentially amplified through the use of ICT), and capacity.

We also see:

1. Weak institutional linkages. Over the past few years some development agencies have created data centers to explore ways to exploit new information technologies. These centers are mainly staffed by people with a background in data science or statistics and the institutional links to the agency’s evaluation office are often weak.

2. Many evaluators have limited familiarity with big data/analytics. Evaluation training programs tend to only present conventional experimental, quasi-experimental and mixed-methods/qualitative designs. They usually do not cover smart data analytics (see Part 1 of this blog). Similarly, many data scientists do not have a background in conventional evaluation methodology (though there are of course exceptions).

3. Methodological differences. Many big data approaches do not conform to the basic principles that underpin conventional program evaluation, for example:

- Data quality: Real-time big data is potentially one of the most powerful sources of data for development programs. Among other things, real-time data can provide early warning signals of potential diseases (e.g. Google Flu), ethnic tension, drought and poverty (Meier 2015). However, when an evaluator asks if the data is biased or of poor quality, the data analyst may respond “Sure the data is biased (e.g. only captured from mobile phone users or Twitter feeds) and it may be of poor quality. All data is biased and usually of poor quality, but it does not matter because tomorrow we will have new data.” This reflects the very different kinds of data that evaluators and data analysts typically work with, and the difference can be explained. Even so, a statement like this can create the impression that data analysts do not take bias and data quality very seriously.

- Data mining: Many data analytics methods are based on mining large data sets to identify patterns of correlation, which are then built into predictive models, normally using Bayesian statistics. Many evaluators frown on data mining because it can identify spurious associations.

- The role of theory: Most (but not all) evaluators believe that an evaluation design should be based on a theoretical framework (theory of change or program theory) that hypothesizes the processes through which the intended outcomes will be achieved. In contrast, there is plenty of debate among data analysts concerning the role of theory, and whether it is necessary at all. Some even go as far as to claim that data analytics means “the end of theory” (Anderson 2008). This, combined with data mining, creates the impression among some evaluators that data analytics uses whatever data is easily accessible, with no theoretical framework to guide the selection of evaluation questions or to assess the adequacy of available data.

- Experimental designs versus predictive analytics: Most quantitative evaluation designs are based on an experimental or quasi-experimental design using a pretest/posttest comparison group design. Given the high cost of data collection, statistical power calculations are frequently used to estimate the minimum sample size required to detect an effect of a given size at a chosen level of statistical significance. Usually this means that analysis can only be conducted on the total sample, as the sample size does not permit statistical significance testing for sub-samples. In contrast, predictive analytics usually employs Bayesian probability models. Due to the low cost of data collection and analysis, it is usually possible to conduct the analysis on the total population (rather than a sample), so that disaggregated analysis can be conducted to compare sub-populations, and often (particularly when also using machine learning) to compute outcome probabilities for individual subjects. There continue to be heated debates concerning the merits of each approach, and there has been much less discussion of how experimental and predictive analytics approaches could complement each other.

4. Ethical and political concerns: Many evaluators also have concerns about who designs and markets big data apps and who benefits financially. Many commercial agencies collect data on low-income populations (for example, their consumption patterns), which may then be sold to consumer products companies with little or no benefit going to the populations from which the information is collected. Some of the algorithms may also include biases against poor and vulnerable groups (O’Neil 2016) that are difficult to detect given the proprietary nature of the algorithms.

Another set of issues concerns whether the ways in which big data are collected and used (for making decisions affecting poor and vulnerable groups) tend to be exclusive (governments and donors use big data to make decisions about programs affecting the poor without consulting them), or whether big data is used to promote inclusion (giving voice to vulnerable groups). These issues are discussed in a recent Rockefeller Foundation blog. There are also many issues around privacy and data security. There is of course no simple answer to these questions, but many of these concerns are lurking in the background when evaluators consider incorporating big data into their evaluations.
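To make the sample-size constraint mentioned under methodological differences more concrete, here is a minimal sketch of a power calculation. It assumes a two-sided comparison of means between a treatment and a comparison group and uses the standard normal approximation; the function names, the default significance level (0.05), the default power (0.80) and the effect sizes are illustrative assumptions, not figures from any particular evaluation.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sample size per group for a two-sided comparison of two means.

    Normal approximation: n = 2 * (z_(1 - alpha/2) + z_power)^2 / d^2,
    where d is the standardized effect size (difference in means / std. dev.).
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)
    z_power = std_normal.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)


def total_sample(effect_size: float, n_subgroups: int = 1) -> int:
    """Total respondents (both groups) if each subgroup is powered separately."""
    return 2 * n_per_group(effect_size) * n_subgroups


if __name__ == "__main__":
    # Hypothetical standardized effect of 0.25, tested on the whole sample
    print(total_sample(0.25))
    # Same effect, tested separately within each of 4 hypothetical sub-samples
    print(total_sample(0.25, n_subgroups=4))
```

Under these assumptions, a standardized effect of 0.25 needs roughly 250 respondents per group, and powering each sub-sample separately multiplies the total by the number of sub-samples. This is one reason why conventional designs often restrict significance testing to the total sample.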

Table 1. Reasons evaluators have been slow to adopt big data and opportunities for bridge building between evaluators and data analysts

| Reason for slow adoption | Opportunities for bridge building |
| --- | --- |
| 1. Weak institutional linkages | Strengthening formal and informal links between data centers and evaluators |
| 2. Evaluators have limited knowledge about big data and data analytics | Capacity development programs covering both big data and conventional evaluation; collaborative pilot evaluation projects |
| 3. Methodological differences | Creating opportunities for dialogue to explore differences and to determine how they can be reconciled; viewing data analytics and evaluation as being complementary rather than competing |
| 4. Ethical and political concerns about big data | Greater focus on ethical codes of conduct, privacy and data security; focusing on making approaches to big data and evaluation inclusive and avoiding exclusive/extractive approaches |

Building bridges between evaluators and big data/analytics

Several steps could help build bridges between evaluators and big data analysts, and thus promote the integration of big data into development evaluation. Catherine Cheney (2016) presents interviews with a number of data scientists and development practitioners stressing that data-driven development needs both social and computer scientists. No single approach is likely to be successful, and the best approach(es) will depend on each specific context, but we could consider:

- Strengthening the formal and informal linkages between data centers and evaluation offices. It may be possible to achieve this within the existing organizational structure, but it will often require some formal organizational changes in terms of lines of communication. Linda Raftree provides a useful framework for understanding how different “buckets” of data (including among others, traditional data and big data) can be brought together, which suggests one pathway to collaboration between data centers and evaluation offices.

- Identifying opportunities for collaborative pilot projects. A useful starting point may be to identify opportunities for collaboration on pilot projects in order to test/demonstrate the value-added of cooperation between the data analysts and evaluators. The pilots should be carefully selected to ensure that both groups are involved equally in the design of the initiative. Time should be budgeted to promote team-building so that each team can understand the other’s approach.

- Promoting dialogue to explore ways to reconcile differences of approach and methodology between big data and evaluation. While many of these differences may at first appear fundamental, some stem at least in part from questions of terminology, and in other cases different approaches can be applied at different stages of the evaluation process. For example:

  - Many evaluators are suspicious of real-time data from sources such as Twitter, or of analysis of phone records, due to selection bias and issues of data quality. However, evaluators are familiar with exploratory data (collected, for example, during project visits, or feedback from staff), which is then checked more systematically in a follow-up study. When presented in this way, the two teams would be able to discuss, in a non-confrontational way, how many kinds of real-time data could be built into evaluation designs.

  - When using Bayesian probability analysis it is necessary to begin with a prior distribution. The probabilities are then updated as more data becomes available. The results of a conventional experimental design can often be used as an input to the definition of the prior distribution. Consequently, it may be possible to consider experimental designs and Bayesian probability analysis as sequential stages of an evaluation rather than as competing approaches.

- Integrated capacity development programs for data analysts and evaluators. These activities would both help develop a broader common methodological framework and serve as an opportunity for team building.
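The idea of treating an experimental design and Bayesian analysis as sequential stages can be sketched in a few lines. The example below is a minimal illustration, assuming a binary outcome (for example, whether a household adopted a practice) and a conjugate Beta prior. The class name `BetaPrior` and all counts are hypothetical, chosen only to show the mechanics: the experimental result defines the prior, and later batches of real-time data update it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaPrior:
    """Beta(a, b) distribution over an outcome rate between 0 and 1."""
    a: float
    b: float

    def update(self, successes: int, failures: int) -> "BetaPrior":
        """Conjugate update: add observed successes and failures to the parameters."""
        return BetaPrior(self.a + successes, self.b + failures)

    @property
    def mean(self) -> float:
        """Expected outcome rate under the current distribution."""
        return self.a / (self.a + self.b)


if __name__ == "__main__":
    # Stage 1: a hypothetical experimental evaluation (30 of 100 adopted)
    # turns a flat Beta(1, 1) into an informed prior.
    belief = BetaPrior(1, 1).update(successes=30, failures=70)
    print(f"after experiment: mean={belief.mean:.3f}")

    # Stage 2: hypothetical batches of real-time monitoring data update it.
    for successes, failures in [(45, 55), (60, 40)]:
        belief = belief.update(successes, failures)
        print(f"after batch: mean={belief.mean:.3f}")
```

A real application would also need to decide how much weight the real-time data deserves given its known selection biases, which is exactly the kind of question the two teams would need to discuss together.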

Conclusion

A number of factors together explain the slow take-up of big data and data analytics by development evaluators. We have proposed several promising approaches for building bridges to overcome these barriers and to promote the integration of big data into development evaluation.

This blog post previously appeared on MERL Tech and has been re-posted here with permission from the publisher.

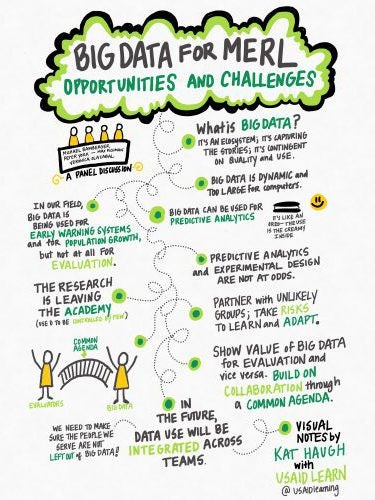

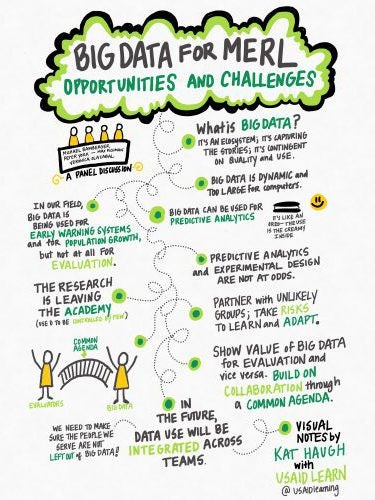

(Image: Big Data session notes from USAIDLearning’s Katherine Haugh [@katherine_haugh]. MERL Tech DC 2016).
