Results and Performance of the World Bank Group 2020

Chapter 1 | Introduction

This is the 10th Results and Performance of the World Bank Group (RAP) by the Independent Evaluation Group (IEG). The RAP assesses the World Bank Group’s performance by analyzing the achievement of project and program objectives through validated ratings and by classifying these objectives according to their outcome levels. It also explains key results and performance trends and discusses ways in which the Bank Group can continue to enhance its results measurement systems and outcome orientation. Shifting the focus beyond ratings was partially in response to the Board of Executive Directors’ request for more evidence on development outcomes and outcome orientation. It was also prompted by the recent capital increases to the International Bank for Reconstruction and Development (IBRD) and the International Finance Corporation (IFC), the International Development Association (IDA) Replenishment, and the need to report on a wider range of project and country outcomes from that expanded resource base.

Box 1.1. Key Terms in This Report

Project development objectives: World Bank projects’ stated objectives framed as a positive outcome. In the International Finance Corporation’s new Anticipated Impact Monitoring and Measurement system, project claims and market claims are similar statements of objectives or intended outcomes.

Outcome orientation: a term used when the World Bank Group generates credible evidence on the outcomes from its development interventions and uses this evidence to engage clients and adapt interventions and portfolios to bolster performance.

Outcomes: changes in behaviors, conditions, or situations resulting from Bank Group activities. Outcomes include intended, unintended, positive, and negative changes.

Ratings: a measure of projects’ and programs’ success relative to objectives stated at approval or revised subsequently. Different aspects of projects and programs have separate ratings. For World Bank projects, the outcome rating measures how effectively and efficiently the project achieved its relevant objective.

Results: an all-encompassing term that refers to the outputs and outcomes from a development intervention.

Results measurement systems: measurement systems that add up ratings and indicators from multiple projects and programs. The Bank Group has different primary results measurement systems for its main business lines, including World Bank, International Finance Corporation, and Multilateral Investment Guarantee Agency projects and country programs. The Bank Group also has different aggregated results measurement systems, such as the Corporate Scorecards and the results measurement systems for International Development Association, gender, and climate change.

Self-evaluation: the formal, empirical assessment of a project, program, or policy written by or for those in charge of the activity.

Validation: the Independent Evaluation Group’s independent, critical review of the evidence, results, and assessments from self-evaluations.

Source: Independent Evaluation Group

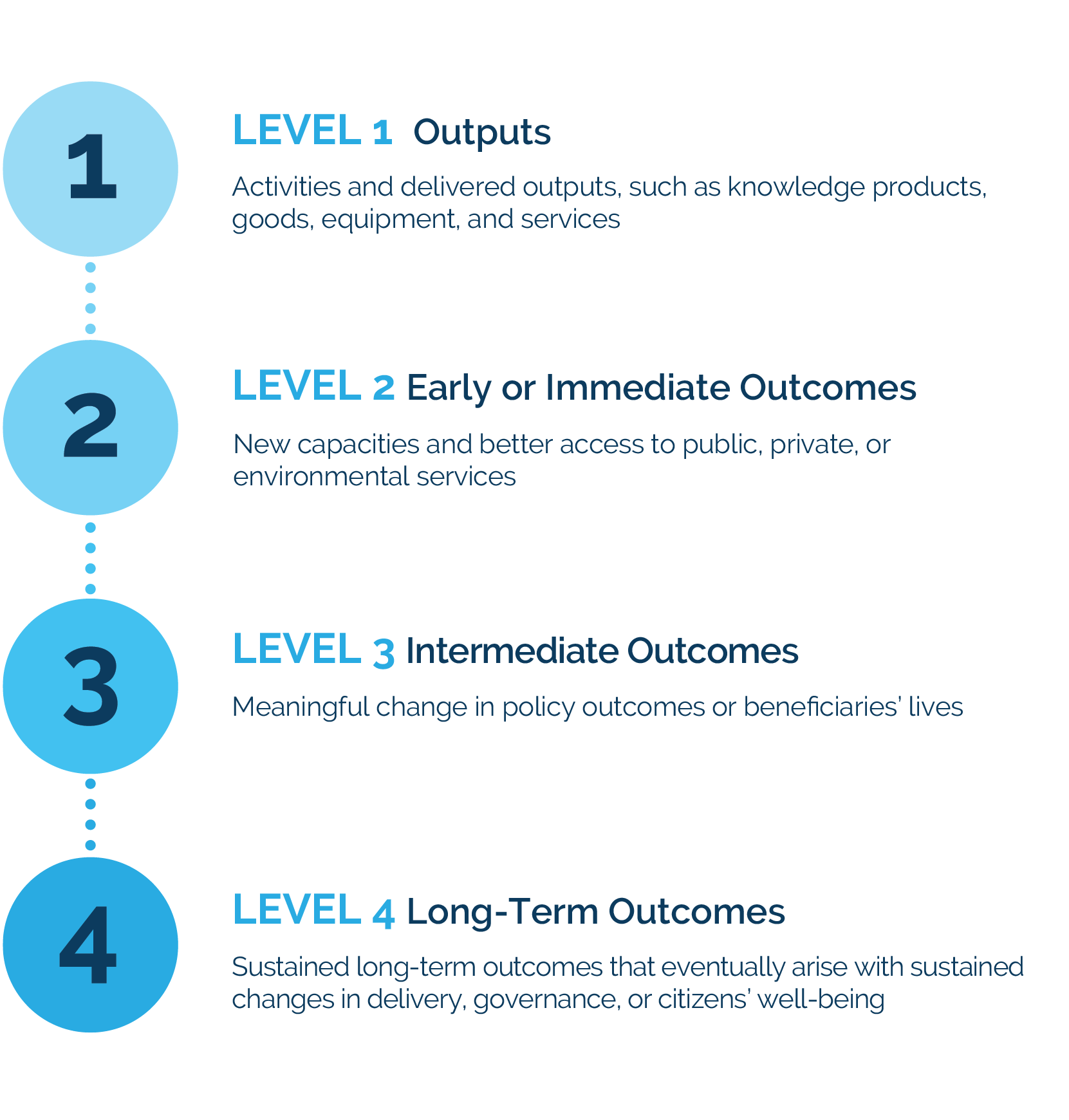

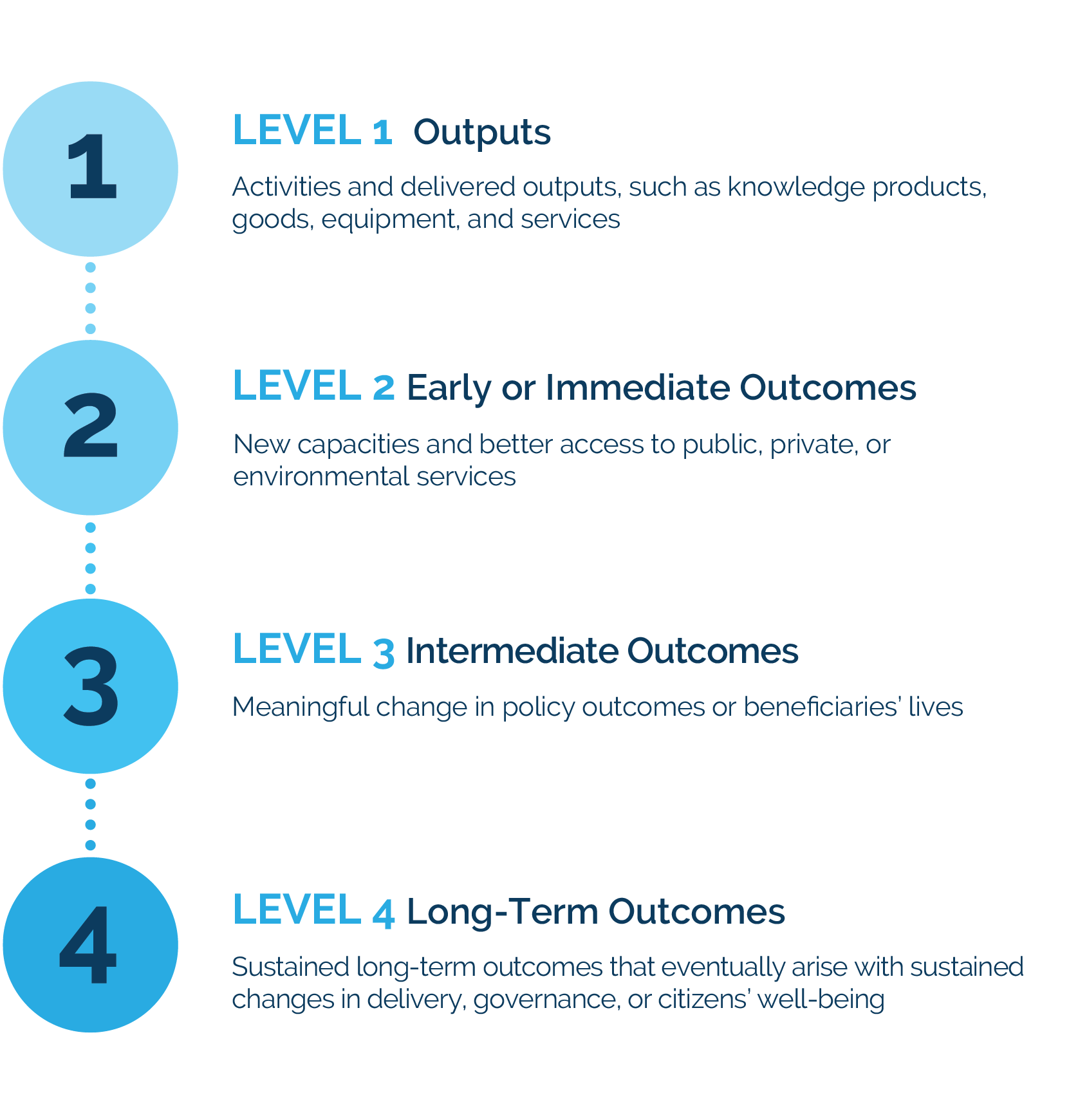

This report examines ratings and outcomes from different perspectives using evidence from the Bank Group’s results measurement systems (see box 1.1 for key terms). Previous RAPs have relied on the project and country program ratings that these systems collect to understand the Bank Group’s results and performance. However, these results measurement systems contain much more evidence and many more indicators than project and program ratings alone. This report breaks with tradition and analyzes this larger evidence base to also describe outcomes and classify outcome levels, particularly for closed and rated World Bank projects and for recently approved World Bank and IFC projects. It also reviews how results measurement systems for select corporate priorities aggregate results. To do so, the report synthesized sectoral theories of change derived from World Bank and IFC projects, among other sources, to build an outcome classification framework that could classify interventions’ stated objectives along a change pathway. Figure 1.1 defines this framework. With this framework, the report can examine different types and levels of outcomes and how these relate to performance, and assess the line of sight, or connection, between the Bank Group’s results measurement systems and higher-level outcomes.

Figure 1.1. Classification of Outcome Levels

Source: Independent Evaluation Group. Refer to the full methodology in part II.

This report is in two parts. Part I covers performance as assessed through ratings: it reports ratings trends for projects and country programs and identifies explanatory factors behind portfolio performance. Part II covers outcome levels: it classifies objectives according to their outcome levels, examines links between performance and outcome levels, and discusses results measurement systems’ outcome orientation. The RAP concludes with some key findings and implications for the Bank Group’s coronavirus (COVID-19) pandemic response and its outcome orientation.

- Again, the fiscal year (FY)19 data are preliminary, so these numbers will change as more projects complete their evaluations.

- The World Bank nearly doubled the average size of new projects, from $87 million in FY05–07 to $157 million in FY09–10 (see also World Bank 2012).

- The Country Policy and Institutional Assessment score is an indicator of countries’ policy framework and institutional capacity.

- Two World Bank reports (2016a, 2018b) summarize the evidence, including an internal audit study.

- Unlike the International Finance Corporation (IFC), the World Bank does not have a rating system for its knowledge products. Perception surveys suggest they can be influential. AidData’s survey in Custer and others (2015) was updated in AidData’s 2014 Reform Efforts Survey Aggregate Data Set (2017).

- This is according to Completion and Learning Reviews, the Independent Evaluation Group’s (IEG) reviews of closed country programs.

- World Bank (2018b) summarizes the studies.

- According to a custom calculation that AidData provided for the Results and Performance of the World Bank Group, the average score for World Bank influence was 3.76 among respondents in countries not classified as fragility, conflict, and violence–affected, compared with 3.28 among respondents in countries that were classified as fragility, conflict, and violence–affected in FY14, the year before the survey. This is based on data documented in Custer and others (2015) and updated in AidData’s 2014 Reform Efforts Survey Aggregate Data Set (2017).

- Mirroring this, econometric research has linked project outcomes to the project team’s access to time, budget, and knowledge (see Ika 2015; and World Bank 2016b, 2017).

- This index is the absolute difference in months between projects’ approval time (time from inception to approval) and projects’ duration (time from effectiveness to project close).

- The score for quality at entry was calculated by converting the 6-point scale into numerical values: highly unsatisfactory = −3, unsatisfactory = −2, moderately unsatisfactory = −1, moderately satisfactory = 1, satisfactory = 2, and highly satisfactory = 3.

- The tentative reversal in IFC’s ratings trend is, however, within the margin of error, given that only a sample of IFC projects undergo ex post evaluation and that not all of the projects sampled for evaluation in the calendar year 2019 cohort have finished their evaluations.

- Although the FY17–19 estimate is based on 171 evaluated projects, the FY19 data are based on only 36 evaluated projects out of 54 projects sampled for evaluation. Estimates will therefore change as more projects finish their evaluations.

- IFC’s managerial actions translate into ratings for the mature portfolio with a long delay. That is because the projects rated this year were approved years before the mentioned actions.

- See World Bank (2019, 19) for a fuller description of IFC’s efforts to improve work quality.

- This is based on all 169 advisory projects evaluated between FY16 and FY18.

- This is based on the IEG’s review of 42 advisory projects evaluated in FY18.

- Of the 13 projects in International Development Association and fragility, conflict, and violence–affected countries evaluated in FY17 and FY18, 10 projects were rated satisfactory or better and 3 projects were rated less than satisfactory. All of the projects fit with the Multilateral Investment Guarantee Agency’s and host countries’ strategic priorities.
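
The two calculations described in the notes above (the quality-at-entry score conversion and the index comparing approval time with project duration) can be illustrated with a minimal sketch. The numerical values of the scale come from the note itself; the function and variable names are hypothetical and chosen only for illustration, and the sketch does not claim to reproduce IEG's actual computation.

```python
# Illustrative sketch only. The mapping values come from the note on quality
# at entry; all names below are hypothetical, not IEG's own.
QUALITY_AT_ENTRY_SCORE = {
    "highly unsatisfactory": -3,
    "unsatisfactory": -2,
    "moderately unsatisfactory": -1,
    "moderately satisfactory": 1,
    "satisfactory": 2,
    "highly satisfactory": 3,
}


def quality_at_entry_score(rating: str) -> int:
    """Convert a 6-point quality-at-entry rating into its numerical value."""
    return QUALITY_AT_ENTRY_SCORE[rating.strip().lower()]


def approval_duration_index(approval_months: float, duration_months: float) -> float:
    """Absolute difference, in months, between approval time and duration.

    approval_months: time from inception to approval.
    duration_months: time from effectiveness to project close.
    """
    return abs(approval_months - duration_months)
```

For example, under these assumptions a project rated "Moderately Satisfactory" at entry would score 1, and a project that took 18 months to approve and ran for 60 months would have an index of 42 months. Note that the scale has no zero point, so the step from moderately unsatisfactory to moderately satisfactory is two units rather than one.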